Early Childhood Learning Assessment

So what does learning assessment mean in early childhood?

The team have spent months agonising over this issue while working out the best approach for our product, LIFT. We have had heaps of input from a number of services trialling and already using LIFT, and we were not that surprised by the inconsistency and problems surrounding this issue. For many years our own service has used an offline version of LIFT alongside a variety of other supporting assessment techniques (mostly check-lists), but our approach, just like those we observed when consulting many other services, was haphazard and disconnected. The advice out there also seemed highly variable and contradictory. The question that kept coming up was: "Why are we doing it this way?"

Getting back to basics

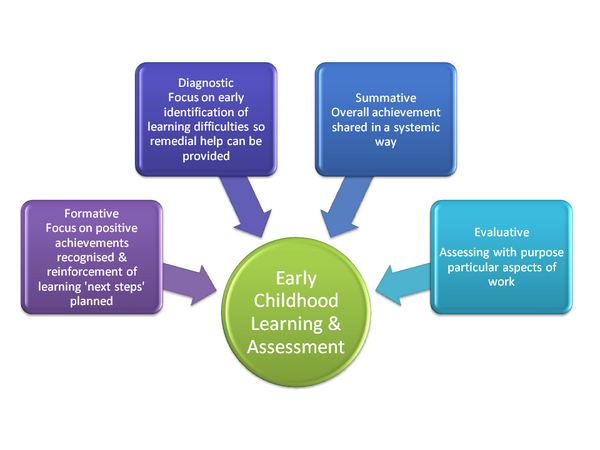

The next step was to identify assessment techniques and tools and evaluate their use and importance. We found a myriad of approaches, but identified four key assessment categories typically used in early childhood learning assessment (see our illustration below).

Formative assessment

Formative assessment is what we typically see in most early childhood services today. It is the process by which a teacher observes a child or children learning and then develops strategies and new learning opportunities that will promote new learning and/or extend existing learning.

For example: OBSERVE - PLAN - OBSERVE - PLAN, and so on.

What became obvious when reviewing this aspect of early childhood programming was that too often this approach is used without planning the next steps (e.g. observations are recorded but lead to no outcome). Sometimes this may be valid, particularly when building up a portfolio of observations about a particular issue or behaviour. More often than not, however, early childhood services observed but rarely connected their observations to next steps, and even more rarely evaluated whether a desired learning outcome or objective was achieved (we will discuss evaluation later in this blog).

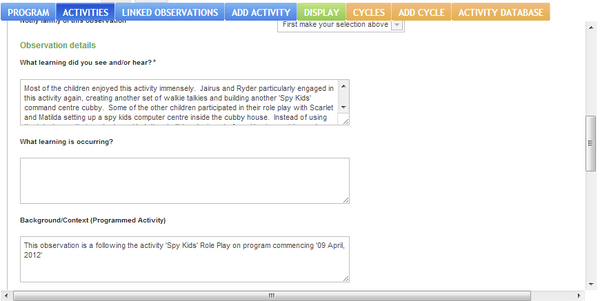

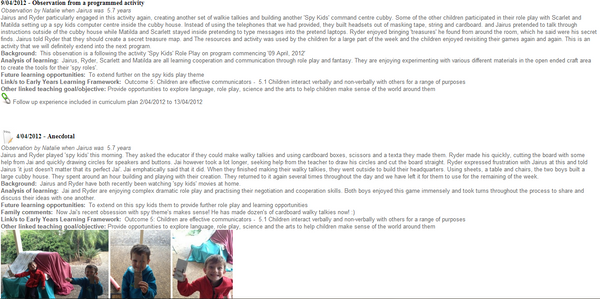

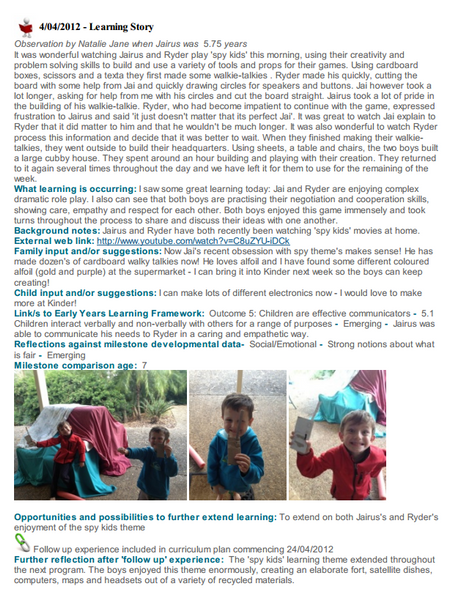

What we found most interesting was the emerging criticism of the use of 'learning stories'. We wish to point out that this criticism is more about the way some teachers use learning stories than about the technique itself. The concern seems to be that many wonderful, pretty stories are being created, but are teachers answering the question "So what?" (I can quote numerous recent examples of early childhood educators who argue this, including Kathy Walker and, more recently, a representative from the DEECD.) I must say that we agree. Learning stories can be a trap and an incredible time waster if not applied effectively. This is why in LIFT we ask only three questions, which we believe translate across a huge variety of observational techniques (from traditional, more formal programming to learning stories and emergent curriculums):

1. What learning did you see and/or hear?

2. What learning is occurring?

3. What are the next steps/future learning opportunities?

The take-away message is to avoid the trap of observing for the sake of observing, with no clearly defined 'next steps'.

Diagnostic assessment

For most early childhood teachers, this primarily means check-lists, but it can occasionally (when working with a medical practitioner) mean more detailed and extensive diagnostic tools and testing.

Finding the right check-list can be very daunting. There are just so many check-lists out there; whose forms do you use? Universities and educational institutions often have their own check-lists, but their focus varies and they are inconsistent with one another. Online, you can find check-lists from all sorts of providers, including government and toy companies, but again they all differ, and most carry copyright restrictions that require early childhood services to purchase the forms before using them in practice.

Early this year our team attended the KPG conference in Melbourne, where one of the presenters strongly encouraged services to connect with their local maternal health providers, as a wealth of resources and support is available through them. This prompted our team to investigate, and we came across the PEDS pre-screening checks (PEDSTest.com), which are used by numerous maternal health nurses and paediatricians around Australia and can be used before conducting a standardised developmental assessment screen. We also came across an amazing website called 'Ages & Stages' http://www.agesandstages.com/ offering a paediatric screening tool for medical practitioners, but it is likely to be too costly for most early childhood services. It will be interesting to see what resources are released under the EYLF (Early Years Learning Framework), but until then services will need to source their own tools. We will keep you posted on any further progress in this area.

Having found the right tool, the next question was how to use it. The key question we faced was: "Do we integrate this tool into our daily formative assessment and planning process, or do we complete the check-lists independently (e.g. ticking boxes on a list)?" Overwhelmingly, we found you have to do both, drawing heavily on families to participate in the process and inform you of their child's progress, something most families can readily help teachers with. The challenge here (albeit not a challenge in LIFT) is to collaborate effectively with families to complete these assessments. Before LIFT, our service held quarterly parent-teacher interviews, and our team relied heavily on sitting down with families and working through various check-lists. Now, with LIFT, parents participate online and can see and contribute to reporting and assessment as it occurs. If collaboration with families is challenging for your service, we recommend using diagnostic tools sparingly and only when there is a genuine concern about a child's development. In our opinion, for the majority of children who are developing typically, diagnostic assessments should not be the focus of their learning assessment.

Summative assessment

Summative assessment is essentially summarised reporting of a child's progress and achievements. There are endless ways to do this, and the format is largely a matter of personal style (from letters to families and school reports to folios). However, some reporting formats are mandatory, such as the 'Transition Statements' that kindergartens forward to primary school teachers just before children start school.

A note about LIFT (Learning Involving Families & Teachers)

Being an educational leader in an early childhood service is a hugely challenging role, particularly in light of the curriculum changes of the last two years with the implementation of the new National Quality Standard and the Early Years Learning Framework.

Turning Differences into Opportunities